Influx Sink¶

Download connector InfluxDB Connector for Kafka 2.1.0

Blog: MQTT. Kafka. InfluxDB. SQL. IoT Harmony.

This InfluxDB Sink allows you to write events from Kafka to InfluxDB. The connector takes the value from the Kafka Connect SinkRecords and inserts a new entry to InfluxDB.

Prerequisites¶

- Apache Kafka 0.11.x or above

- Kafka Connect 2.x or above

- InfluxDB

- Java 1.8

Features¶

- KCQL routing and querying: topic to measurement mapping and field selection

- Error policies for handling failures

- Payload support for Schema.Struct with a Struct payload, Schema.String with a JSON payload, and JSON payloads with no schema.

KCQL Support¶

INSERT INTO influx_measure SELECT { FIELD, ... } FROM kafka_topic WITHTIMESTAMP { FIELD|sys_time() }

Tip

You can specify multiple KCQL statements separated by ; to have the connector sink multiple topics.

The InfluxDB sink supports KCQL, the Kafka Connect Query Language. The following KCQL support is available:

- Field selection

- Target measure selection

- Selection of a field to use as the timestamp, or use of the system time. The field is expected to be in milliseconds since epoch.

- Ability to set tags.
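Because the timestamp field is expected in milliseconds since epoch, producers that hold a datetime or an epoch value in seconds need to convert it before publishing. The following is a minimal sketch of that conversion; the helper names `to_epoch_millis` and `seconds_to_millis` are illustrative and not part of the connector.

```python
from datetime import datetime, timedelta, timezone

# Reference point for "milliseconds since epoch"
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def to_epoch_millis(moment: datetime) -> int:
    """Convert a timezone-aware datetime to whole milliseconds since epoch."""
    if moment.tzinfo is None:
        raise ValueError("expected a timezone-aware datetime")
    # Integer division of timedeltas avoids floating point rounding
    return (moment - EPOCH) // timedelta(milliseconds=1)


def seconds_to_millis(epoch_seconds: int) -> int:
    """Convert an epoch value in seconds to milliseconds."""
    return epoch_seconds * 1000


event_time = datetime(2017, 11, 24, 10, 58, 10, tzinfo=timezone.utc)
print(to_epoch_millis(event_time))
print(seconds_to_millis(1511521090))
```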

Example:

-- Insert mode, select all fields from topicA and write to measureA

INSERT INTO measureA SELECT * FROM topicA

-- Insert mode, select 3 fields from topicB, rename them and write to measureB, using field y as the point timestamp

INSERT INTO measureB SELECT x AS a, y AS b, z AS c FROM topicB WITHTIMESTAMP y

-- Insert mode, select 3 fields from topicB, rename them and write to measureB, using the current system time as the point timestamp

INSERT INTO measureB SELECT x AS a, y AS b, z AS c FROM topicB WITHTIMESTAMP sys_time()

This is set in the connect.influx.kcql option.
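When several topics are routed, it can help to generate the value of connect.influx.kcql rather than write it by hand. The sketch below composes statements in the form described above and joins them with ; as the tip suggests. `build_kcql` is a hypothetical helper for illustration only; it does not validate field or topic names.

```python
from typing import Mapping, Optional


def build_kcql(measurement: str,
               topic: str,
               fields: Optional[Mapping[str, str]] = None,
               timestamp: Optional[str] = None) -> str:
    """Compose one KCQL INSERT statement (illustrative helper).

    fields maps a source field to its alias; None or empty selects *.
    timestamp is a payload field name or the literal 'sys_time()'.
    """
    if fields:
        selection = ", ".join(
            src if src == alias else f"{src} AS {alias}"
            for src, alias in fields.items()
        )
    else:
        selection = "*"
    statement = f"INSERT INTO {measurement} SELECT {selection} FROM {topic}"
    if timestamp:
        statement += f" WITHTIMESTAMP {timestamp}"
    return statement


statements = [
    build_kcql("measureA", "topicA"),
    build_kcql("measureB", "topicB", {"x": "a", "y": "b", "z": "c"}, "y"),
]
# Multiple statements are separated by ';' in connect.influx.kcql
kcql_option = ";".join(statements)
print(kcql_option)
```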

Tags¶

The InfluxDB client API lets you attach a set of tags (key-value pairs) to each point added. The current connector version allows you to provide them via KCQL:

INSERT INTO measure SELECT { FIELDS, ... } FROM kafka_topic [WITHTIMESTAMP { FIELD|sys_time() }] [WITHTAG (FIELD|constant_key=constant_value, ...)]

Example:

-- Tagging using constants

INSERT INTO measureA SELECT * FROM topicA WITHTAG (DataMountaineer=awesome, Influx=rulz!)

-- Tagging using fields in the payload. Say we have a Payment structure with these fields: amount, from, to, note

INSERT INTO measureA SELECT * FROM topicA WITHTAG (from, to)

-- Tagging using a combination of fields in the payload and constants. Say we have a Payment structure with these fields: amount, from, to, note

INSERT INTO measureA SELECT * FROM topicA WITHTAG (from, to, provider=DataMountaineer)

Note

At the moment you can only reference the payload fields; if the structure is nested, you can’t address nested fields. Support for such functionality will be provided soon. You can’t tag with fields present in the Kafka message key, or use the message metadata (partition, topic, index).

Payload Support¶

Schema.Struct and a Struct Payload¶

If you follow best practice while producing the events, each message should carry its schema information. The best option is to send AVRO. Your connector configuration options include:

key.converter=io.confluent.connect.avro.AvroConverter

key.converter.schema.registry.url=http://localhost:8081

value.converter=io.confluent.connect.avro.AvroConverter

value.converter.schema.registry.url=http://localhost:8081

This requires the SchemaRegistry.

Note

This needs to be done in the connect worker properties if using Kafka versions prior to 0.11

Schema.String and a JSON Payload¶

Sometimes the producer would find it easier to just send a message with Schema.String and a JSON string. In this case your connector configuration should be set to value.converter=org.apache.kafka.connect.json.JsonConverter.

This doesn’t require the SchemaRegistry.

key.converter=org.apache.kafka.connect.json.JsonConverter

value.converter=org.apache.kafka.connect.json.JsonConverter

Note

This needs to be done in the connect worker properties if using Kafka versions prior to 0.11

No schema and a JSON Payload¶

There are many existing systems which are publishing JSON over Kafka and bringing them in line with best practices is quite a challenge, hence we added the support. To enable this support you must change the converters in the connector configuration.

key.converter=org.apache.kafka.connect.json.JsonConverter

key.converter.schemas.enable=false

value.converter=org.apache.kafka.connect.json.JsonConverter

value.converter.schemas.enable=false

Note

This needs to be done in the connect worker properties if using Kafka versions prior to 0.11
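To make the effect of schemas.enable concrete, the sketch below prints the two message shapes that Kafka Connect's JsonConverter works with: plain JSON when schemas.enable=false, and a schema/payload envelope when schemas.enable=true. The record content and the schema name User are example values only.

```python
import json

# Example record; the values are illustrative only
record = {
    "company": "DataMountaineer",
    "address": "MontainTop",
    "latitude": -49.817964,
    "longitude": -141.645812,
}

# schemas.enable=false: the message value is the bare JSON document
plain_value = json.dumps(record)

# schemas.enable=true: JsonConverter expects a schema/payload envelope
envelope_value = json.dumps({
    "schema": {
        "type": "struct",
        "name": "User",
        "optional": False,
        "fields": [
            {"field": "company", "type": "string", "optional": False},
            {"field": "address", "type": "string", "optional": False},
            {"field": "latitude", "type": "double", "optional": False},
            {"field": "longitude", "type": "double", "optional": False},
        ],
    },
    "payload": record,
})

print(plain_value)
print(envelope_value)
```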

Error Policies¶

Landoop sink connectors support error policies. These error policies allow you to control the behavior of the sink if it encounters an error when writing records to the target system. Since Kafka retains the records, subject to the configured retention policy of the topic, the sink can ignore the error, fail the connector or attempt redelivery.

Throw

Any error on write to the target system will be propagated up and processing is stopped. This is the default behavior.

Noop

Any error on write to the target database is ignored and processing continues.

Warning

This can lead to missed errors if you don’t have adequate monitoring. Data is not lost as it’s still in Kafka, subject to Kafka’s retention policy. The sink currently does not distinguish between integrity constraint violations and other exceptions thrown by any drivers or the target system.

Retry

Any error on write to the target system causes a RetriableException to be thrown. This causes the Kafka Connect framework to pause and replay the message. Offsets are not committed. For example, if the database is offline, the write fails and the message can be replayed. With the Retry policy, the issue can be fixed without stopping the sink.

Lenses QuickStart¶

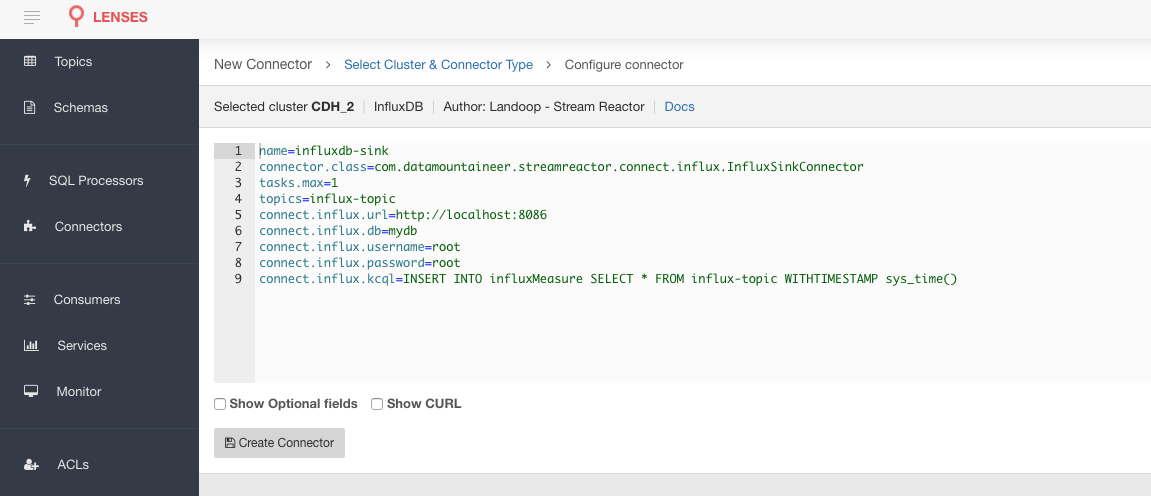

The easiest way to try this out is with Lenses Box, the pre-configured Docker image that comes with this connector pre-installed. Go to Connectors –> New Connector –> InfluxDB and paste your configuration.

InfluxDB Setup¶

Download and start InfluxDB. Users of OS X 10.8 and higher can install InfluxDB using the Homebrew package manager.

Once brew is installed, you can install InfluxDB by running:

brew update

brew install influxdb

Note

InfluxDB starts an Admin web server listening on port 8083 by default. For this quickstart, this will collide with Kafka Connect’s default port of 8083. Since we are running on a single node, we will need to edit the InfluxDB config.

#create config dir

sudo mkdir /etc/influxdb

#dump the config

influxd config | sudo tee /etc/influxdb/influxdb.generated.conf > /dev/null

Now change the bind-address in the following section to port 8087 or any other free port.

[admin]

enabled = true

bind-address = ":8087"

https-enabled = false

https-certificate = "/etc/ssl/influxdb.pem"

Now start InfluxDB.

influxd

If you are running on a single node start InfluxDB with the new configuration file we generated.

influxd -config /etc/influxdb/influxdb.generated.conf

Sink Connector QuickStart¶

Start Kafka Connect in distributed mode (see install).

In this mode a REST endpoint on port 8083 is exposed to accept connector configurations.

We developed a command line interface (CLI) to make interacting with the Connect REST API easier. The CLI can be found in the Stream Reactor download under the bin folder. Alternatively, the jar can be pulled from our GitHub releases page.

Test data¶

The Sink expects a database to exist in InfluxDB. Use the InfluxDB CLI to create this:

➜ ~ influx

Visit https://enterprise.influxdata.com to register for updates, InfluxDB server management, and monitoring.

Connected to http://localhost:8086 version v1.0.2

InfluxDB shell version: v1.0.2

> CREATE DATABASE mydb

Installing the Connector¶

In production, Connect should be run in distributed mode.

- Install and configure a Kafka Connect cluster.

- Create a folder on each server called plugins/lib.

- Copy the required connector jars from the Stream Reactor download into the above folder.

- Edit connect-avro-distributed.properties in the etc/schema-registry folder and uncomment the plugin.path option. Set it to the root directory, i.e. the plugins folder into which you deployed the Stream Reactor connector jars.

- Start Connect:

bin/connect-distributed etc/schema-registry/connect-avro-distributed.properties

Connect workers are long-running processes, so set up an init.d or systemctl service accordingly.

Starting the Connector (Distributed)¶

Download and install Stream Reactor. Follow the instructions here if you haven’t already done so. All paths in the quickstart are relative to the location where you installed the Stream Reactor.

Once Connect has started, we can use the kafka-connect-tools CLI to post our distributed properties file for InfluxDB. If you are using the Docker images, you will also have to set the following environment variable so the CLI can connect to the Kafka Connect REST API.

export KAFKA_CONNECT_REST="http://myserver:myport"

➜ bin/connect-cli create influx-sink < conf/influxdb-sink.properties

name=influxdb-sink

connector.class=com.datamountaineer.streamreactor.connect.influx.InfluxSinkConnector

tasks.max=1

topics=influx-topic

connect.influx.url=http://localhost:8086

connect.influx.db=mydb

connect.influx.kcql=INSERT INTO influxMeasure SELECT * FROM influx-topic WITHTIMESTAMP sys_time()
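As an alternative to the CLI, the same properties can be submitted to the Kafka Connect REST API, which accepts a JSON document with name and config keys on POST /connectors. The sketch below builds that request from the properties text; `parse_properties` and `build_create_request` are illustrative helpers, the worker URL http://localhost:8083 is an assumption, and the actual send is left commented out.

```python
import json
import urllib.request


def parse_properties(text: str) -> dict:
    """Parse simple key=value lines, skipping blanks and # comments."""
    config = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        # Split on the first '=' only, so values may contain '='
        key, _, value = line.partition("=")
        config[key.strip()] = value.strip()
    return config


def build_create_request(connect_url: str, properties_text: str) -> urllib.request.Request:
    """Build a POST /connectors request for the Kafka Connect REST API."""
    config = parse_properties(properties_text)
    body = json.dumps({"name": config["name"], "config": config}).encode("utf-8")
    return urllib.request.Request(
        url=f"{connect_url}/connectors",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


PROPERTIES = """
name=influxdb-sink
connector.class=com.datamountaineer.streamreactor.connect.influx.InfluxSinkConnector
tasks.max=1
topics=influx-topic
connect.influx.url=http://localhost:8086
connect.influx.db=mydb
connect.influx.kcql=INSERT INTO influxMeasure SELECT * FROM influx-topic WITHTIMESTAMP sys_time()
"""

request = build_create_request("http://localhost:8083", PROPERTIES)
print(request.data.decode("utf-8"))
# urllib.request.urlopen(request)  # uncomment to send to a running worker
```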

If you switch back to the terminal you started Kafka Connect in, you should see the InfluxDB Sink being accepted and the task starting.

We can use the CLI to check if the connector is up but you should be able to see this in logs as well.

#check for running connectors with the CLI

➜ bin/connect-cli ps

influxdb-sink

INFO

[ASCII-art banner: Landoop InfluxDB Sink, by Stefan Bocutiu]

(com.datamountaineer.streamreactor.connect.influx.InfluxSinkTask:45)

Test Records¶

Tip

If your input topic doesn’t match the target, use Lenses SQL to transform the input in real time, no Java or Scala required!

Now we need to put some records into the influx-topic topic. We can use the kafka-avro-console-producer to do this.

Start the producer and pass in a schema to register in the Schema Registry. The schema has a company field of type string, an address field of type string, a latitude field of type float and a longitude field of type float.

bin/kafka-avro-console-producer \

--broker-list localhost:9092 --topic influx-topic \

--property value.schema='{"type":"record","name":"User",

"fields":[{"name":"company","type":"string"},{"name":"address","type":"string"},{"name":"latitude","type":"float"},{"name":"longitude","type":"float"}]}'

Now the producer is waiting for input. Paste in the following:

{"company": "DataMountaineer","address": "MontainTop","latitude": -49.817964,"longitude": -141.645812}

Check for records in InfluxDB¶

Now check the logs of the connector; you should see this:

INFO Setting newly assigned partitions [influx-topic-0] for group connect-influx-sink (org.apache.kafka.clients.consumer.internals.ConsumerCoordinator:231)

INFO Received 1 record(-s) (com.datamountaineer.streamreactor.connect.influx.InfluxSinkTask:81)

INFO Writing 1 points to the database... (com.datamountaineer.streamreactor.connect.influx.writers.InfluxDbWriter:45)

INFO Records handled (com.datamountaineer.streamreactor.connect.influx.InfluxSinkTask:83)

Check in InfluxDB.

✗ influx

Visit https://enterprise.influxdata.com to register for updates, InfluxDB server management, and monitoring.

Connected to http://localhost:8086 version v1.0.2

InfluxDB shell version: v1.0.2

> use mydb;

Using database mydb

> show measurements;

name: measurements

------------------

name

influxMeasure

> select * from influxMeasure;

name: influxMeasure

-------------------

time address async company latitude longitude

1478269679104000000 MontainTop true DataMountaineer -49.817962646484375 -141.64581298828125
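The same check can be scripted against the InfluxDB 1.x HTTP API, whose /query endpoint takes the database in db and the statement in q. This is a minimal sketch assuming an unauthenticated instance on http://localhost:8086; `build_query_url` and `run_query` are illustrative names, and only the URL construction runs without a server.

```python
import json
import urllib.parse
import urllib.request


def build_query_url(base_url: str, database: str, query: str) -> str:
    """Build the URL for an InfluxDB 1.x /query call."""
    params = urllib.parse.urlencode({"db": database, "q": query})
    return f"{base_url}/query?{params}"


def run_query(base_url: str, database: str, query: str) -> dict:
    """Execute the query and return the decoded JSON response."""
    with urllib.request.urlopen(build_query_url(base_url, database, query)) as response:
        return json.load(response)


url = build_query_url("http://localhost:8086", "mydb", "SELECT * FROM influxMeasure")
print(url)
# result = run_query("http://localhost:8086", "mydb", "SELECT * FROM influxMeasure")
```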

Error Policies¶

The Sink has three error policies that determine how failed writes to the target database are handled. The error policies affect the behavior of the schema evolution characteristics of the sink. See the schema evolution section for more information.

Throw

Any error on write to the target database will be propagated up and processing is stopped. This is the default behavior.

Noop

Any error on write to the target database is ignored and processing continues.

Warning

This can lead to missed errors if you don’t have adequate monitoring. Data is not lost as it’s still in Kafka, subject to Kafka’s retention policy. The Sink currently does not distinguish between integrity constraint violations and other exceptions thrown by drivers.

Retry

Any error on write to the target database causes a RetriableException to be thrown. This causes the Kafka Connect framework to pause and replay the message. Offsets are not committed. For example, if the database is offline, the write fails and the message can be replayed. With the Retry policy, the issue can be fixed without stopping the sink.

The length of time the Sink will retry can be controlled with the connect.influx.max.retries and connect.influx.retry.interval options.
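As a rough guide to how long the sink keeps retrying, the two options can be multiplied together. The sketch below assumes a fixed wait of connect.influx.retry.interval between attempts and ignores the time each attempt itself takes, so treat the result as an approximation; `retry_window_seconds` is an illustrative helper.

```python
def retry_window_seconds(max_retries: int, retry_interval_ms: int) -> float:
    """Approximate total time spent waiting between retries, in seconds."""
    return max_retries * retry_interval_ms / 1000


# Documented defaults: connect.influx.max.retries=10, connect.influx.retry.interval=60000
default_window = retry_window_seconds(10, 60000)
print(f"{default_window / 60:.0f} minutes")
```

With the defaults this gives roughly ten minutes to restore the target before the retries are exhausted.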

Configurations¶

The Kafka Connect framework requires the following in addition to any connector-specific configurations:

| Config | Description | Type | Value |
|---|---|---|---|
| name | Name of the connector | string | This must be unique across the Connect cluster |
| topics | The topics to sink. The connector will check that this matches the KCQL statement | string | |
| tasks.max | The number of tasks to scale output | int | 1 |
| connector.class | Name of the connector class | string | com.datamountaineer.streamreactor.connect.influx.InfluxSinkConnector |

Connector Configurations¶

| Config | Description | Type |
|---|---|---|
| connect.influx.kcql | Kafka connect query language expression. Allows for an expressive topic to table routing, field selection, and renaming. For InfluxDB it allows either setting a default or selecting a field from the topic as the Point measurement | string |
| connect.influx.url | The InfluxDB database url | string |
| connect.influx.db | The InfluxDB database | string |

Optional Configurations¶

| Config | Description | Type | Default |
|---|---|---|---|
| connect.influx.username | The InfluxDB username | string | |
| connect.influx.password | The InfluxDB password | string | |
| connect.influx.consistency.level | Specifies the write consistency. If any write operations do not meet the configured consistency guarantees, an error will occur and the data will not be indexed. The default consistency level is ALL. Other available options are ANY, ONE, QUORUM | string | ALL |
| connect.influx.retention.policy | Determines how long InfluxDB keeps the data. The duration of the retention policy can be specified in m (minutes), h (hours), d (days), w (weeks) or INF (infinite). Note that the minimum retention period is one hour. Default retention is autogen from 1.0 onwards or default for any previous version | string | autogen |
| connect.influx.error.policy | Specifies the action to be taken if an error occurs while inserting the data. There are three available options: NOOP, the error is swallowed; THROW, the error is allowed to propagate; and RETRY. For RETRY the Kafka message is redelivered up to a maximum number of times specified by the connect.influx.max.retries option | string | THROW |
| connect.influx.max.retries | The maximum number of times a message is retried. Only valid when the connect.influx.error.policy is set to RETRY | string | 10 |
| connect.influx.retry.interval | The interval, in milliseconds, between retries if the sink is using connect.influx.error.policy set to RETRY | string | 60000 |
| connect.progress.enabled | Enables the output for how many records have been processed | boolean | false |

Example¶

name=influxdb-sink

connector.class=com.datamountaineer.streamreactor.connect.influx.InfluxSinkConnector

tasks.max=1

topics=influx-topic

connect.influx.db=mydb

connect.influx.url=http://localhost:8086

connect.influx.kcql=INSERT INTO influxMeasure SELECT * FROM influx-topic WITHTIMESTAMP sys_time()

Schema Evolution¶

Upstream changes to schemas are handled by the Schema Registry, which will validate the addition and removal of fields, data type changes and whether defaults are set. The Schema Registry enforces AVRO schema evolution rules. More information can be found here.

Kubernetes¶

Helm Charts are provided at our repo, add the repo to your Helm instance and install. We recommend using the Landscaper to manage Helm Values since typically each Connector instance has its own deployment.

Add the Helm charts to your Helm instance:

helm repo add landoop https://lensesio.github.io/kafka-helm-charts/

Troubleshooting¶

If you see the below error:

you can't select all the fields because 'fields' is resolving to a type: 'java.util.hashmap' which is not supported by InfluxDB API

Then your incoming message is nested, and you have to flatten it with KCQL. For example, given this message:

{

"fields": {

"free": 473169920,

"total": 3221225472,

"used": 2748055552,

"used_percent": 85.31087239583334

},

"name": "swap",

"tags": {

"dc": "us-east-1",

"host": "Viveks-MacBook-Pro.local",

"rack": "1a"

},

"timestamp": 1511521090

}

SELECT * will result in inserting the nested fields. This is not allowed, as the InfluxDB point builder API allows only primitive types.

What you want is a KCQL statement like this:

INSERT INTO influxMeasure

SELECT fields.free, fields.total, fields.used, fields.used_percent

FROM fleet WITHTIMESTAMP sys_time()

WITHTAG ( tags.dc, tags.host, tags.rack)
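For wider messages, the flattened field list can be derived from a sample message instead of being typed by hand. The sketch below walks the nested objects and emits the dotted paths used in the statement above; `primitive_paths` is an illustrative helper, and it assumes the sample message is representative of the topic.

```python
import json


def primitive_paths(value: dict, prefix: str = "") -> list:
    """Return dotted paths to every non-object leaf under value."""
    paths = []
    for key, child in value.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(child, dict):
            paths.extend(primitive_paths(child, path))
        else:
            paths.append(path)
    return paths


message = json.loads("""
{
  "fields": {"free": 473169920, "total": 3221225472,
             "used": 2748055552, "used_percent": 85.31087239583334},
  "name": "swap",
  "tags": {"dc": "us-east-1", "host": "Viveks-MacBook-Pro.local", "rack": "1a"},
  "timestamp": 1511521090
}
""")

field_paths = primitive_paths(message["fields"], "fields")
tag_paths = primitive_paths(message["tags"], "tags")
kcql = (
    "INSERT INTO influxMeasure SELECT " + ", ".join(field_paths)
    + " FROM fleet WITHTIMESTAMP sys_time()"
    + " WITHTAG (" + ", ".join(tag_paths) + ")"
)
print(kcql)
```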

Please review the FAQs and join our Slack channel.