Alertmanager Integration

Lenses comes with an alerting subsystem that can be tailored to individual needs. More information about the alerting subsystem is available in the user guide.

A complete alerting system usually also needs alert management and notifications. In simple terms, each alert has to reach the right team within the right timeframe, without overwhelming that team with alerts it does not own, with duplicates, or with alerts that are merely a byproduct of a top-level alert. To that end, Lenses integrates with Alertmanager, which provides alert deduplication, grouping and routing to various backends (such as email, PagerDuty and Slack), as well as silencing and inhibition.

Lenses Configuration

Configuring the Alertmanager integration takes only a couple of options. The only mandatory one is the Alertmanager endpoint setting. Alertmanager clusters are supported by listing multiple endpoints, separated by commas:

lenses.alert.manager.endpoints="http://alertmanager.1.url:9093,http://alertmanager.2.url:9093"

A useful option is the generator URL, where the Lenses address should be set. That way alerts will include a link to Lenses, which the recipient can use to quickly navigate to the web interface:

lenses.alert.manager.generator.url="http://lenses.url:9991"

For the complete list of options, see the configuration reference.

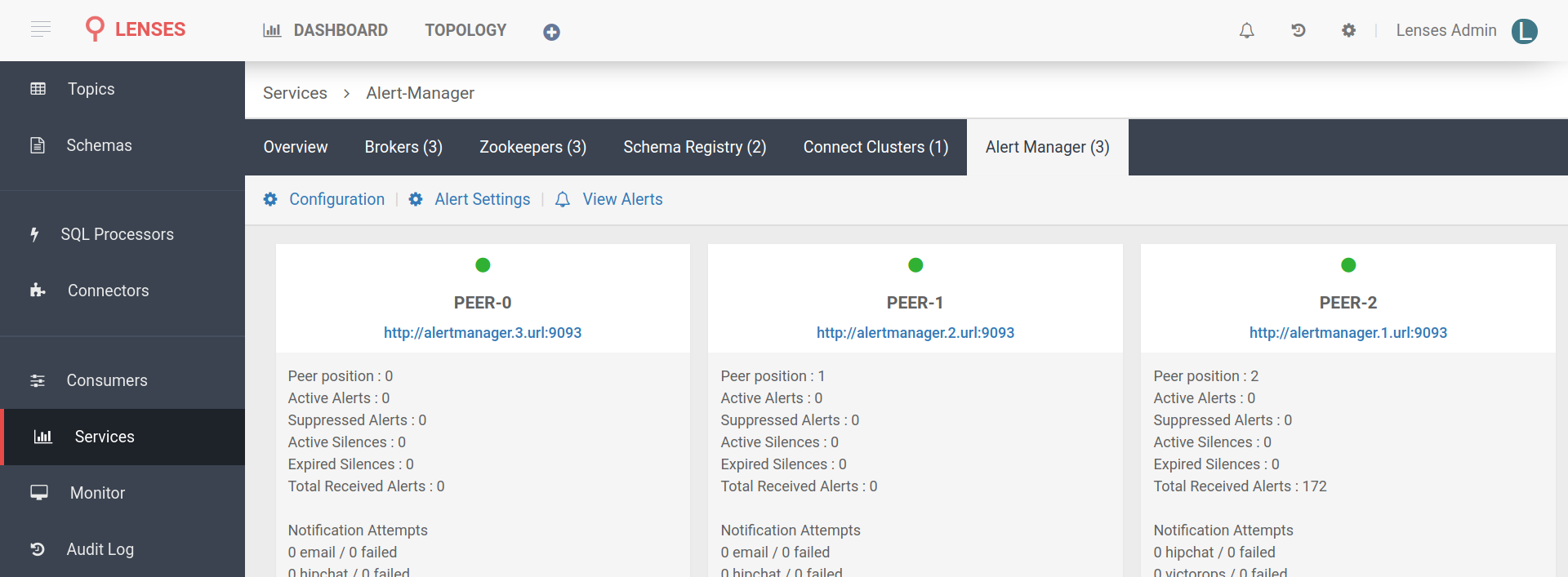

Lenses™ | Alertmanager Service Status

Alerts Attributes

Although the inner workings and configuration of Alertmanager are beyond the scope of this guide, it is useful to go briefly into some of the details, to make it easier to get the most out of this feature.

Alerts are posted to Alertmanager as JSON objects. Each alert has a set of labels and a set of annotations. The set of labels is what uniquely identifies the alert, whilst the annotations provide a more elaborate description of the event.

Alertmanager can use the set of labels in order to deduplicate, group, route, silence and inhibit alerts, whilst the annotations (and a field called generatorURL) can be sent, along with the labels, to a recipient to help quickly understand the issue.

Lenses alerts offer these main labels [1]:

| label name | description | values |
|---|---|---|
| category | the category of the alert | Infrastructure, Consumers, Kafka Connect, Topics |
| instance | the URL or the subsystem that triggered the event | can be the address of a broker, a description like UnderReplication, etc |
| severity | the severity of the event | INFO, MEDIUM, HIGH, CRITICAL [2] |

[1] There are more labels, but they vary by instance. Only these three are present in all alerts and can be considered stable, so the Alertmanager configuration may be built around them.

[2] There is also a LOW level, but it is not currently in use.

Lenses alerts have these annotations:

| annotation name | description | values |
|---|---|---|
| source | the source of the event | default is Lenses unless configured otherwise |
| summary | the summary of the event | depends on the alert |
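To make the labels and annotations above more concrete, here is a minimal Python sketch of what one such alert object could look like. The exact payload Lenses sends is not shown in this guide, so the structure below is an assumption based on the general Alertmanager alert shape (`labels`, `annotations`, `generatorURL`). The `build_alert` helper and the summary text are hypothetical and exist only for illustration.

```python
import json


def build_alert(category, instance, severity, summary,
                source="Lenses", generator_url="http://lenses.url:9991"):
    """Return one alert as a dict (hypothetical helper, not a Lenses API)."""
    return {
        # Labels identify the alert; these three are the stable ones.
        "labels": {
            "category": category,
            "instance": instance,
            "severity": severity,
        },
        # Annotations describe the event for the recipient.
        "annotations": {
            "source": source,
            "summary": summary,
        },
        # Link back to the system that generated the alert.
        "generatorURL": generator_url,
    }


alert = build_alert("Infrastructure", "UnderReplication", "HIGH",
                    "Hypothetical summary text")

# Alerts are sent as a JSON array, so a single alert is wrapped in a list.
payload = json.dumps([alert], indent=2)
print(payload)
```

Because only `category`, `instance` and `severity` are guaranteed to be present, routing and inhibition rules are safest when built around those three labels.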

Alertmanager Example

In the example below, alertmanager is configured with three receivers: default, urgent and emergency. There are three backends available as well: email, slack and pushover. The default receiver sends events only to slack. The urgent receiver sends events to both email and slack, whilst the emergency receiver sends to all three backends.

The routing rules send Infrastructure category events of HIGH or CRITICAL severity to the emergency receiver, so the team receives push notifications on their mobile phones and can act immediately. Events of HIGH or CRITICAL severity in all other categories are sent to the urgent receiver, so the proper team member gets an email notification. Finally, all other events (those that did not match a routing rule) are sent to the default receiver, which posts them to a Slack channel where a member of the team can look at them at a convenient time.
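The routing decision described above can be sketched in a few lines of Python. This is only a simplified model, not Alertmanager's implementation: it assumes child routes are tried in order, that the first match wins, and that regular expressions must match the whole label value. The names `ROUTES` and `pick_receiver` are hypothetical.

```python
import re

# Child routes in the order they are tried: (receiver, {label: regex}).
ROUTES = [
    ("emergency", {"severity": "HIGH|CRITICAL", "category": "Infrastructure"}),
    ("urgent", {"severity": "HIGH|CRITICAL"}),
]
DEFAULT_RECEIVER = "default"


def pick_receiver(labels):
    """Return the receiver for an alert, given its labels as a dict."""
    for receiver, matchers in ROUTES:
        # Every matcher of a route has to match; a missing label is treated
        # as an empty string in this sketch.
        if all(re.fullmatch(pattern, labels.get(name, ""))
               for name, pattern in matchers.items()):
            return receiver
    # Nothing matched, so the alert falls through to the top-level receiver.
    return DEFAULT_RECEIVER


print(pick_receiver({"category": "Infrastructure", "severity": "CRITICAL"}))
print(pick_receiver({"category": "Consumers", "severity": "HIGH"}))
print(pick_receiver({"category": "Topics", "severity": "INFO"}))
```

The order of the routes matters: if the urgent route were listed first, it would also capture the Infrastructure alerts and the emergency route would never be reached.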

Two inhibition rules are also set. While a CRITICAL alert is firing, Alertmanager will not send notifications for lower-severity events until the CRITICAL issue is resolved. This is because the main problem (for example, a broker that can no longer serve requests) will cause further problems, and team members should get one notification for the root cause rather than a flood of notifications. The second inhibition rule applies to events of severity HIGH: events from the same instance but with lower severity are inhibited until the main alert for that instance is resolved.
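The two inhibition rules can be modelled roughly as follows. This Python sketch is a simplification under stated assumptions: alerts are plain label dicts, a missing label is treated like an empty one (so two alerts that both lack a label count as equal on it), and regular expressions must match the whole value. `INHIBIT_RULES`, `matches` and `is_inhibited` are hypothetical names.

```python
import re

# Each rule: which alerts inhibit (source), which are muted (target), and the
# labels that must be equal on both for the rule to apply.
INHIBIT_RULES = [
    {"source": {"severity": "CRITICAL"},
     "target": {"severity": "INFO|MEDIUM|HIGH"},
     "equal": ["source"]},
    {"source": {"severity": "HIGH"},
     "target": {"severity": "INFO|MEDIUM"},
     "equal": ["instance", "source"]},
]


def matches(labels, matchers):
    """True if every matcher fully matches the corresponding label value."""
    return all(re.fullmatch(pattern, labels.get(name, ""))
               for name, pattern in matchers.items())


def is_inhibited(target, firing):
    """True if some other firing alert inhibits `target` under any rule."""
    for rule in INHIBIT_RULES:
        if not matches(target, rule["target"]):
            continue
        for source in firing:
            if source is target:
                continue
            same = all(source.get(label, "") == target.get(label, "")
                       for label in rule["equal"])
            if matches(source, rule["source"]) and same:
                return True
    return False


critical = {"severity": "CRITICAL", "instance": "broker-1"}
high = {"severity": "HIGH", "instance": "broker-1"}
medium_same = {"severity": "MEDIUM", "instance": "broker-1"}
medium_other = {"severity": "MEDIUM", "instance": "broker-2"}

print(is_inhibited(medium_other, [critical, medium_other]))  # muted by CRITICAL
print(is_inhibited(medium_same, [high, medium_same]))        # muted by HIGH, same instance
print(is_inhibited(medium_other, [high, medium_other]))      # different instance
```

In this model a HIGH alert on one instance does not mute lower-severity alerts on other instances, whereas a CRITICAL alert mutes lower-severity alerts regardless of instance.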

For the Slack notification a custom text is set, which includes the summary, source and generatorURL.

    global:
      slack_api_url: https://hooks.slack.com/services/XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX
      smtp_from: alertmanager@example.com
      smtp_smarthost: smtp.example.com:25
      smtp_auth_username: SMTP_USER
      smtp_auth_password: SMTP_PASS

    route:
      # If an alert does not match any rule, it goes to the default:
      receiver: 'default'
      group_wait: 30s
      group_interval: 5m
      repeat_interval: 4h
      group_by: [category,severity]
      routes:
        # Infrastructure alerts of HIGH or CRITICAL severity:
        - receiver: emergency
          match_re:
            severity: HIGH|CRITICAL
            category: Infrastructure
        # HIGH or CRITICAL alerts of any other category.
        # match_re is needed here: plain match compares the literal string.
        - receiver: urgent
          match_re:
            severity: HIGH|CRITICAL

    inhibit_rules:
      # A CRITICAL alert inhibits all lower severities:
      - source_match:
          severity: 'CRITICAL'
        target_match_re:
          severity: 'INFO|MEDIUM|HIGH'
        equal: ['source']
      # A HIGH alert inhibits lower severities from the same instance:
      - source_match:
          severity: 'HIGH'
        target_match_re:
          severity: 'INFO|MEDIUM'
        equal: ['instance','source']

    receivers:
      - name: 'default'
        slack_configs:
          - channel: alerts
            send_resolved: true
            text: "{{ range .Alerts }}{{ .Labels.instance }}: {{ .Annotations.summary }}.\nVia: {{ .Annotations.source }}\nGenerator: {{ .GeneratorURL }}\n{{ end }}"
      - name: 'urgent'
        email_configs:
          - to: 'user1@example.com, user2@example.com, user3@example.com'
        slack_configs:
          - channel: alerts
            send_resolved: true
            text: "{{ range .Alerts }}{{ .Labels.instance }}: {{ .Annotations.summary }}.\nVia: {{ .Annotations.source }}\nGenerator: {{ .GeneratorURL }}\n{{ end }}"
      - name: 'emergency'
        pushover_configs:
          - user_key: xxxxxxxxxxxxxxxxxxxxxxxxxxxxxx
            token: xxxxxxxxxxxxxxxxxxxxxxxxxxxxxx
            expire: 2m
        email_configs:
          - to: 'user1@example.com, user2@example.com, user3@example.com'
        slack_configs:
          - channel: alerts
            send_resolved: true
            text: "{{ range .Alerts }}{{ .Labels.instance }}: {{ .Annotations.summary }}\nVia: {{ .Annotations.source }}\nGenerator: {{ .GeneratorURL }}\n{{ end }}"
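To see what the custom Slack `text` in the receivers above produces, the following Python sketch mimics the template used by the default and urgent receivers: it loops over the alerts in a notification and emits the instance, summary, source and generator URL for each. This is an approximation for illustration only, since Alertmanager renders the text with its own templating. The function name and the sample alert values are hypothetical.

```python
def render_slack_text(alerts):
    """Build the message body for a list of alert dicts (illustrative only)."""
    parts = []
    for alert in alerts:
        parts.append(
            f'{alert["labels"]["instance"]}: {alert["annotations"]["summary"]}.\n'
            f'Via: {alert["annotations"]["source"]}\n'
            f'Generator: {alert["generatorURL"]}\n'
        )
    return "".join(parts)


sample = {
    "labels": {"instance": "broker-1", "severity": "HIGH"},
    "annotations": {"source": "Lenses", "summary": "Hypothetical summary"},
    "generatorURL": "http://lenses.url:9991",
}

print(render_slack_text([sample]))
```

Since alerts are grouped by category and severity, one Slack message may contain several such three-line entries, one per alert in the group.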