Producing Data

A Kafka producer (data generator) inserts data into a Kafka topic. In this page, you will learn how to create a Kafka producer using Lenses Box with Kafka Connect.

Creating a Source Connector

As you already saw, it is really easy to connect to the Kafka server process that is running in the Lenses Box Docker container and make sure that everything is OK. Now, you are going to learn how to create a new Source Connector.

This page will show how to create a producer using the File Source Connector, which allows you to read a text file line by line and insert the data into a Kafka topic.

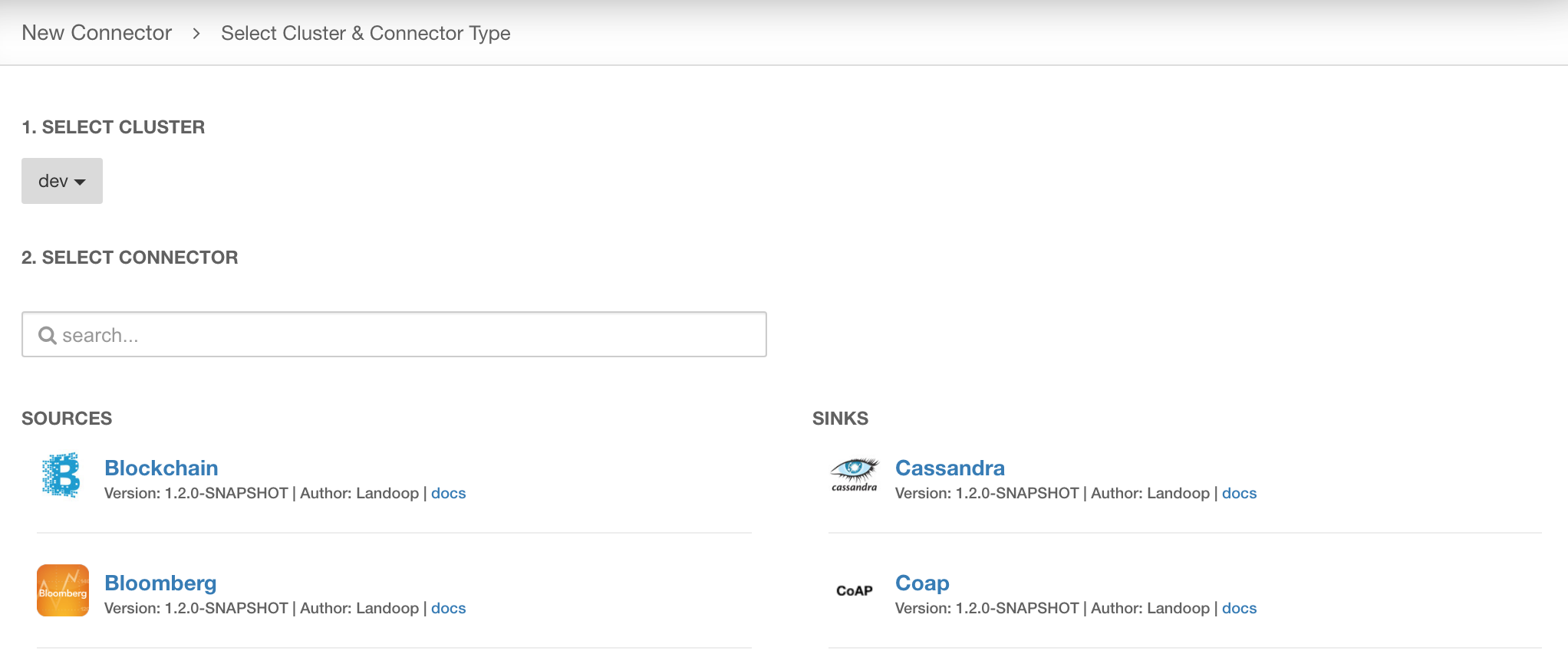

First, click Connectors on the left menu and then click on the + New Connector link in the upper right corner of the page. The next page will look like the following:

Two columns are shown, one column with Sources and another column with Sinks. We are interested in the left column, the Sources. In this case you will need to find a Source named File, which allows you to use a plain text file as your Kafka source.

You will need to set up the Source Connector and provide the necessary parameters, including the name of the new connector, the path of the input file, and the topic that the contents of the file will be written to. To view all the parameters, not only the mandatory ones, click on the Show Optional fields box, which will be renamed to Show only Required fields once clicked.

The final implementation of the Source Connector will be as follows:

    name=file-connector
    connector.class=org.apache.kafka.connect.file.FileStreamSourceConnector
    tasks.max=1
    key.converter=
    value.converter=
    header.converter=
    transforms=
    config.action.reload=RESTART
    errors.log.include.messages=false
    file=/var/log/broker.log
    topic=var_log_broker
    batch.size=2000

So, what is happening here? We have just created a new connector named file-connector that reads /var/log/broker.log line by line and writes each line to a new topic named var_log_broker.

Notice that the file path used (/var/log/broker.log) refers to the filesystem of the running Lenses Box Docker container and not to your local machine.
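If you prefer to script this step, the same settings can be turned into the JSON body that the Kafka Connect REST API accepts on `POST /connectors`. The following is a minimal sketch rather than part of the Lenses UI workflow: it uses only the non-empty, connector-specific settings from this page, the helper names are hypothetical, and the URL `http://localhost:8083` is an assumption about how the Connect port of your container is exposed.

```python
import json
import urllib.request

# Subset of the settings shown on this page (keys with empty values left out).
CONNECTOR_PROPERTIES = """
name=file-connector
connector.class=org.apache.kafka.connect.file.FileStreamSourceConnector
tasks.max=1
file=/var/log/broker.log
topic=var_log_broker
batch.size=2000
"""


def parse_properties(text):
    """Turn key=value lines into a dict, skipping blanks, comments and empty values."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        if value.strip():
            props[key.strip()] = value.strip()
    return props


def build_connector_payload(props):
    """Build the {"name": ..., "config": {...}} body used by POST /connectors."""
    config = dict(props)
    name = config.pop("name")
    return {"name": name, "config": config}


def create_connector(payload, connect_url="http://localhost:8083"):
    """Send the payload to a Connect worker. The default URL is an assumption."""
    request = urllib.request.Request(
        connect_url + "/connectors",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


payload = build_connector_payload(parse_properties(CONNECTOR_PROPERTIES))
print(json.dumps(payload, indent=2))
```

The script only prints the payload. Calling `create_connector(payload)` would submit it, provided a Connect worker is actually reachable at the assumed address.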

The Lenses Box Docker image includes various log files apart from the one used here. You can find the list of log files as follows:

    $ docker exec -it lenses-dev bash
    First login. Configuring lenses-cli.
    root@fast-data-dev / $ ls -l /var/log/
    total 1060
    -rw-r--r-- 1 root root 267248 Mar 27 19:38 broker.log
    -rw-r--r-- 1 root root 9970 Mar 27 19:40 caddy.log
    -rw-r--r-- 1 root root 415783 Mar 27 19:40 connect-distributed.log
    -rw-r--r-- 1 root root 790 Mar 27 18:59 lenses-processor.log
    -rw-r--r-- 1 root root 27628 Mar 27 19:40 lenses.log
    -rw-r--r-- 1 root root 11923 Mar 27 19:00 logs-to-kafka.log
    -rw-r--r-- 1 root root 11978 Mar 27 18:59 nullsink.log
    -rw-r--r-- 1 root root 25830 Mar 27 19:40 running-ais.log
    -rw-r--r-- 1 root root 14130 Mar 27 18:58 running-cc-data.log
    -rw-r--r-- 1 root root 18375 Mar 27 19:37 running-cc-payments.log
    -rw-r--r-- 1 root root 28768 Mar 27 19:40 running-financial-tweets.log
    -rw-r--r-- 1 root root 14408 Mar 27 19:40 running-reddit.log
    -rw-r--r-- 1 root root 30327 Mar 27 19:40 running-smart.log
    -rw-r--r-- 1 root root 12140 Mar 27 19:40 running-taxis.log
    -rw-r--r-- 1 root root 25705 Mar 27 19:40 running-telecom-italia.log
    -rw-r--r-- 1 root root 76597 Mar 27 19:40 schema-registry.log
    -rw-r--r-- 1 root root 5441 Mar 27 19:00 supervisord.log
    -rw-r--r-- 1 root root 28110 Mar 27 19:40 zookeeper.log

However, nothing prohibits you from using your own plain text files.
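Because the connector reads from the container's filesystem, your own file has to be copied into the container first. One way to do this is `docker cp`; the sketch below only builds and prints the command. The container name `lenses-dev` is taken from the session above, while the file names `./my-events.txt` and `/var/log/my-events.txt` and the helper name are hypothetical.

```python
import shlex


def build_docker_cp_command(local_path, container_path, container="lenses-dev"):
    """Return the argument list that copies a local file into the container."""
    return ["docker", "cp", local_path, f"{container}:{container_path}"]


# Hypothetical paths, used for illustration only.
command = build_docker_cp_command("./my-events.txt", "/var/log/my-events.txt")
print(shlex.join(command))

# To actually run the copy:
#   import subprocess
#   subprocess.run(command, check=True)
```

After copying, the connector's file parameter would point at the path inside the container (here, the hypothetical /var/log/my-events.txt).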

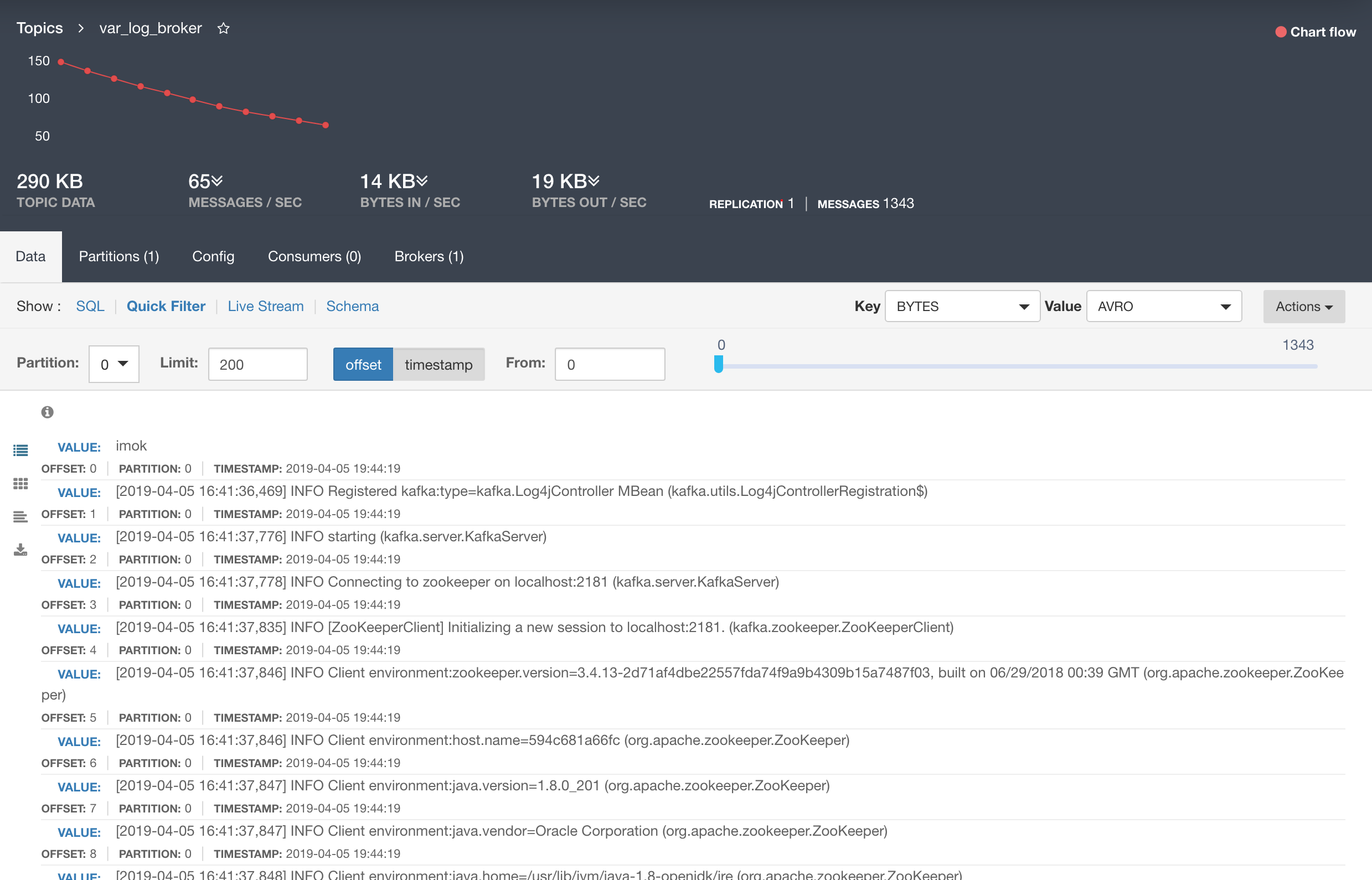

Checking Kafka for data

There are two main ways to make sure that your producers are writing data to Kafka. The first is to open the Lenses UI, find your topic, and look at its data. The second is to connect to the Kafka server process of the Docker container, as you saw in the Docker page. The Lenses web UI is by far the easiest and quickest to use.
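For the second approach, a console consumer run inside the container can print the first few records of the topic. The sketch below only assembles the `docker exec` command. It assumes that the `kafka-console-consumer` tool is on the PATH inside the `lenses-dev` container and that the broker listens on `localhost:9092` there; neither is confirmed on this page, and the helper name is hypothetical.

```python
import shlex


def build_console_consumer_command(
    topic,
    container="lenses-dev",
    bootstrap_server="localhost:9092",  # assumed broker address inside the container
    max_messages=5,
):
    """Return the argument list that reads a few records from the start of a topic."""
    return [
        "docker", "exec", container,
        "kafka-console-consumer",  # assumed to be available in the container
        "--bootstrap-server", bootstrap_server,
        "--topic", topic,
        "--from-beginning",
        "--max-messages", str(max_messages),
    ]


command = build_console_consumer_command("var_log_broker")
print(shlex.join(command))

# To actually run it:
#   import subprocess
#   subprocess.run(command, check=True)
```

Limiting the read with `--max-messages` makes the consumer exit on its own instead of waiting for new records.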

Using the UI of Lenses, you can see that the contents of the var_log_broker topic will look similar to the following:

Congratulations, you have just inserted data into Kafka using a Kafka connector and Lenses Box!